This Week Was About Judgment

Musk on the stand. LeCun staking a billion. And every adviser, founder and junior in Britain learning what happens when they hand theirs to a machine.

Issue #52 | Week 19 | Sunday 3 May 2026

This issue is also available as a podcast. Listen on Spotify, Apple Podcasts or YouTube and tell me what you think.

Bottom Line Up Front

Every story this week is the same story. Who gets to decide what AI becomes. Whose judgment is worth deferring to. And what happens, in courtroom and boardroom and bedroom, when the question is handed to someone, or something, that is not equipped to answer it. Elon Musk took the stand for seven hours to argue that judgment about humanity’s most powerful technology cannot be left to a man he says traded a charity for an empire. Yann LeCun has been handed a billion dollars to bet that the entire industry’s foundational judgment is wrong. And in a Yorkshire negotiation last month, a senior professional handed the judgment of an advisory board offer to a chatbot and lost the role inside twenty-four hours. Same week. Same lesson. Different rooms.

1. Musk On The Stand: A Charity Case In The Age Of Superintelligence

Elon Musk’s account of why OpenAI exists begins not in a Silicon Valley office but in a kitchen.

Sometime in 2015, at Larry Page’s house, the Google co-founder and Musk got into one of those late-night arguments that only happen between billionaires whose companies have run out of frontiers. Musk asked Page what he thought would happen if AI ended up wiping out humanity. According to Musk’s testimony in Oakland this week, Page replied that this would be fine, so long as artificial intelligence survived. Musk pushed back. And Page, in Musk’s account, called him a “speciesist” for caring more about humans than the digital life-forms of the future.

That insult, Musk told the jury, is why OpenAI exists. He went looking for what he described as the opposite of Google. An open-source nonprofit. Not for him. Not for a strategic partner. For humanity, as the founding charter put it.

A decade later, OpenAI is valued at roughly $852 billion, partnered with Microsoft, racing toward an IPO that may value it at a trillion. And Musk is in a federal courthouse in California arguing that the men he funded broke a charitable trust to build it.

The trial mechanics matter. Musk testified for over seven hours across three days in front of Judge Yvonne Gonzalez Rogers and a nine-person advisory jury. His lawyer Steven Molo led the direct examination. OpenAI’s lead counsel William Savitt handled the cross. The case, narrowed before opening statements, now rests on two counts: unjust enrichment and breach of charitable trust. Musk is seeking up to $150 billion in damages, none of it for himself, all of it routed back into OpenAI’s nonprofit arm. He also wants Sam Altman and Greg Brockman removed from leadership and the for-profit conversion unwound.

Musk laid out his account of his own awareness in three phases. Phase one, full confidence in the team. Phase two, beginning around 2017, a creeping suspicion that they were stealing a charity. Phase three, certainty that they had stolen it. That tidy structure has a problem. Phase three is the one that matters legally, because Musk only filed in 2024, and the statute of limitations for breach of charitable trust runs three years. His lawyers need the jury to believe the certainty arrived in 2023, when Microsoft’s $10 billion investment closed. Musk’s own timeline, told from the stand, suggested the doubt began six years earlier.

The legal weakness, as nonprofit lawyers have pointed out for two years, is that donors usually cannot sue when a charity changes course. The legal opening is fraud, or rather what was promised in the moment the cheque was written. And that is where the documents come in.

The most damaging single piece of evidence is not from Altman. It is a 2017 diary entry from Brockman, written months after a meeting at which he had assured Musk that OpenAI would remain a nonprofit. The Brockman entry, cited directly by Judge Gonzalez Rogers in her January ruling that sent the case to trial, calls the nonprofit pledge a lie in plain English. A separate page, written days later, asks what it would take, financially, to get to a billion dollars. That is the President of a self-described charity, in his own notebook, doing the maths on his exit.

Musk has come under pressure of his own. OpenAI’s case is that Musk is a sour-grapes founder whose own attempt to take the company over was rebuffed, who left the board in 2018, and who only sued in 2024 after his own for-profit AI lab xAI started losing the race. The cross-examination has dragged out the awkward facts. Musk’s own xAI is, of course, a for-profit. Musk gave OpenAI roughly $38 million between 2016 and 2020, not the much larger figures sometimes cited. And he admitted using OpenAI’s own models to train xAI, on the grounds that this is just standard practice in the industry.

The judge has refused to let Musk turn the courtroom into an AI safety hearing. When his lawyers tried to widen the scope of an expert witness to cover extinction risk, she limited it. She also noted, with precision worth quoting in spirit if not in length, that there is something faintly absurd about a man warning the court that AI may kill us all while running an AI lab himself.

Savitt pressed the point. He pulled a Tesla analyst-call quote in which Musk had openly worried about losing voting control of the company because, in his own words, he was about to build an enormous AI-enabled robot army. Asked under oath whether he had said this, Musk eventually conceded he had. His defence, when pressed, was that if he built the robots himself, he could make sure they were safe. Neuralink, he had explained the day before, also serves AI safety. The man warning a federal court that artificial intelligence may exterminate the species is also building the army, the implant, and the lab that races against the lab he is suing. The jury was watching.

Strip everything away and the trial is about one question. Can the founders of an organisation built with charitable donations and a stated mission to benefit humanity legally convert that work into one of the most valuable companies on earth and keep the equity for themselves? Musk says no. Altman says the conversion serves the mission better than the original structure ever could. Brockman’s notebook says it was a lie inside three months.

The jury’s verdict is advisory. Judge Gonzalez Rogers will decide what it means. The verdict is expected by mid-May.

The genuinely interesting question is not whether Musk wins. It is what the trial reveals. The men currently holding the most powerful technology in human history were, on the available evidence, willing to tell their primary backer one thing in a meeting and another in their private notebooks. Whether or not that meets the legal standard for breach of charitable trust, it is a useful answer to the question Musk keeps asking the jury.

It matters who is in charge. We just learned a great deal about who is.

2. The Multiplier Cuts Both Ways

A few weeks ago one of our portfolio companies offered an industry expert a place on its advisory board, on the same terms advisers have been getting for thirty years. One or two days a month. A grant of stock options at one per cent, vesting over the usual period. The standard, sensible deal.

The counter that came back was, I am fairly sure, the most ludicrous thing I have ever read.

They wanted more equity than the founders. More than the CEO. They wanted the lot fully vested on day one, a structure that would have created the kind of tax and governance mess no rational adviser would willingly invite. And although they were not putting in a single penny of cash, they demanded future investment participation rights of the sort reserved for the lead investor in a priced funding round.

It was the corporate equivalent of arriving on a first date and asking for the house keys, the dog, and a forwarding address for the mortgage.

When I scrolled back through the email conversation the fingerprints were everywhere. The over-confident American legalese. The mannered bullet points. The breathless tone of an advocate who has never met either party. It read as though ChatGPT had been in the room the whole time.

A capable professional, in their own field, had handed the negotiation of a deal they did not understand to a chatbot, and the chatbot had obliged with cheerful nonsense.

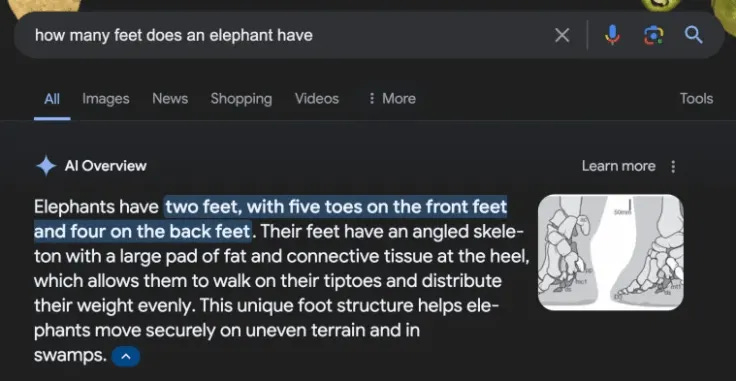

This is the danger of large language models that nobody quite says out loud. We hear endlessly about hallucinations, regulation, copyright and doom. The everyday catastrophe is quieter. It is people with a thin sliver of knowledge, or none at all, walking into rooms armed with confident answers from a machine that has no idea which room they are in.

At Yorkshire AI we model the technology very deliberately as an amplifier. A lawyer running our tooling can process work at roughly twenty-five times the rate of a peer in a traditional setup. A single experienced software engineer, properly equipped, can carry a product to product-market fit in territory that used to demand a team of twenty or twenty-five.

Those numbers are real, and they are what AI does in adept hands.

But here is the unfashionable bit. The multiplier only fires if the expertise is already there. The machine cannot deposit judgment into the user. It does not know what counts as reasonable in a Yorkshire boardroom or on Sand Hill Road.

Plug a novice into the same model and the same arithmetic operates in the wrong direction. What gets amplified is the gap. AI is the most efficient compounder of stupidity ever invented, and at exactly the same multiple as the genuine article.

In our adviser’s case the model did not know the going rate for two days a month. It did not understand vested versus unvested. It did not, frankly, know what an adviser is. It produced a document of formidable surface confidence; the recipient pressed send, and the offer was withdrawn within twenty-four hours. They got no role, no stock, no second chance, and no understanding of what had just happened.

Here is what is often missed. The compounding is not symmetrical and it is not benign. When a skilled professional uses AI badly the cost is wasted time and a sharper second draft. When an unskilled one uses AI confidently the cost is the deal, the role, the credibility, the next opportunity. The downside has a long tail because the rest of the room is too polite to tell you what just happened.

Britain is about to learn this lesson at industrial scale. Anthropic’s own research, published last month, found that current models can already perform the majority of discrete tasks associated with engineering, law, finance and business. That finding should not reassure you. It should worry you. Because the test is not whether the model can do the task. The test is whether the user can spot when it has done it badly.

The temptation, in every profession, will be to lean harder on the machine the less you actually know. New starters, junior managers, founders pitching their first round, all will be tempted to let the model do their thinking. They will sound credible. They will look prepared. And they will, in quiet boardrooms up and down the country, be marked down before they have left the car park.

So before you open the laptop to compose something that matters, ask the harder question.

Is AI multiplying your judgment, or merely accelerating your ignorance?

3. How To Stay The Expert In The Room

The piece above was always going to provoke the same question, sent to me by half a dozen readers within hours of publication. What does the safe version look like?

So here it is. Five rules that have held up across a thousand hours of internal use at Yorkshire AI. Not a checklist. A way of working.

1. Build the floor before you use the lift.

The multiplier only fires if the floor of expertise is already there. Never use AI to generate a final product in a domain where you cannot spot a bad answer. If you do not know what counts as a fair adviser package, do not let a chatbot draft one. Read three real ones first. Talk to one human who has done the job. Then, and only then, let the model help with the wording.

2. Dictate the room, do not let the model invent it.

Left to its own devices, a frontier model will invent terms that look impressive and make zero business sense. Future investment participation rights for an adviser putting in no cash. Indemnities written for a Delaware LLC when you are dealing with a Yorkshire limited. Every prompt that matters needs the room described in advance. Jurisdiction. Industry. Stage. Cultural context. The model has no idea which boardroom it is in unless you tell it.

3. Strip the AI fingerprints.

Over-confident American legalese. Mannered bullet points. Em dashes everywhere. Tone-deaf flattery dressed up as preamble. A breathless rhythm that sounds like an advocate who has never met either party. If a document reads like that, your reader will know within ten seconds. Rewrite it in your own voice. The point is not to pretend AI was not involved. The point is to make sure the judgment in the document is recognisably yours.

4. Verify everything that sounds confident.

The most dangerous output is the most assured one. The model will confidently state a fact, an industry rate, a piece of case law, a tax treatment. Open a separate window. Verify. The single highest-leverage habit a knowledge worker can build right now is the one-minute fact-check on every concrete claim a chatbot puts in front of them. It is not optional. It is the price of using the technology safely.

5. Ask the harder question before you press send.

Before the email goes out, before the contract gets signed, before the pitch deck is shared, run the same internal test. Is this multiplying my judgment, or accelerating my ignorance? If you cannot answer it confidently, do not send. The recipient cannot tell the difference between AI confidence and yours. They will only find out later, and so will you.

Two further notes for completeness.

If you are a parent or a manager, the rules above need to be taught, not assumed. The instinct to outsource judgment to the machine is strongest in the people who least understand what they are outsourcing. A new starter in your team needs to be told, on day one, that AI is a multiplier, not a substitute. Most won’t be told that, because most managers have not yet thought about it themselves.

And if you are using AI personally, the simplest single rule is this. Never let the model send anything you have not read carefully and rewritten in your own words. The signature on the bottom is yours. The judgment had better be too.

4. The Billion-Dollar Bet Against The Entire Industry

While Musk fights in court over how the LLM era was built, one of the men who actually built it has quietly walked away.

Yann LeCun is a Turing Award winner, one of the three so-called godfathers of modern AI alongside Geoffrey Hinton and Yoshua Bengio, and for over a decade he ran Meta’s Fundamental AI Research lab. He left in November 2025 after a long, public, and increasingly testy disagreement with the rest of the industry about where AI is going. His view, stated repeatedly, is that large language models are a statistical illusion. Impressive in narrow ways, fundamentally limited, and a dead end on the path to anything resembling general intelligence.

The industry, for the most part, ignored him.

In March, AMI Labs, his new venture, closed a seed round of $1.03 billion at a pre-money valuation of $3.5 billion. It is the largest seed round ever raised by a European company. The lead syndicate is a who’s-who of global capital: Cathay Innovation, Greycroft, Hiro Capital, HV Capital and Bezos Expeditions co-leading, with Nvidia, Samsung, Temasek and Toyota Ventures alongside. Individual cheques came in from Mark Cuban, Eric Schmidt, and, in a small moment of historical poetry, Tim Berners-Lee. The startup is twelve people. The valuation is $3.5 billion. The patience is years, not quarters.

Day-to-day operations are run by Alexandre LeBrun, a French entrepreneur who previously founded the medical AI company Nabla. The company is headquartered in Paris, with offices in New York, Montreal and Singapore. Emmanuel Macron tweeted his blessing within hours of the announcement.

What is AMI actually building?

LeCun’s bet is not a better chatbot. It is what he calls a world model. Where an LLM predicts the next word in a sequence based on text, a world model learns abstract representations of how physical reality actually works. It learns from sensor data. From video. From cause and effect. From the way a glass falls. From the way a child watches a room before it tries to walk across it.

The technical underpinning is something LeCun has been arguing for since 2022, called JEPA, the Joint Embedding Predictive Architecture. The promise is AI that can plan multi-step actions in the real world, that can reason about physics and space, and that does not need to hallucinate to fill in the gaps. The targets are robotics, advanced manufacturing, healthcare, and the spatially aware devices that everyone in Silicon Valley has been promising for ten years and shipping for none.

There are reasons to be sceptical. AMI has no product, no revenue, and no near-term prospect of either. World models are a long-term scientific project, not the kind of startup that ships in three months and posts revenue in six. LeBrun himself has acknowledged that. And there are competitors. Fei-Fei Li’s World Labs raised $1 billion last month on the same thesis. The argument is no longer whether world models will matter. It is who builds them first.

Two things make this story worth your attention while everyone else watches Oakland.

The first is the architectural argument. If LeCun is right, the AI conversation Britain is having about copyright, about scale, about chatbots, is a conversation about a technology that has already peaked. The next architecture will not be a bigger LLM. It will be a different kind of model entirely.

The second is the geography. AMI is European, headquartered in Paris, and explicit about being one of the few frontier labs that is neither American nor Chinese. That is a sovereign-AI bet. European governments and enterprise buyers have been quietly looking for AI infrastructure that does not route through US hyperscalers or expose their data to American jurisdiction. AMI is being built for that demand, not against it.

While the men who built the LLM era fight in court over who owns it, the man who has the strongest claim to having seen what comes next has walked out, raised a billion dollars, and started building it in Paris.

That is the story to watch.

The Sunday Signal Tech & AI Layoff Tracker

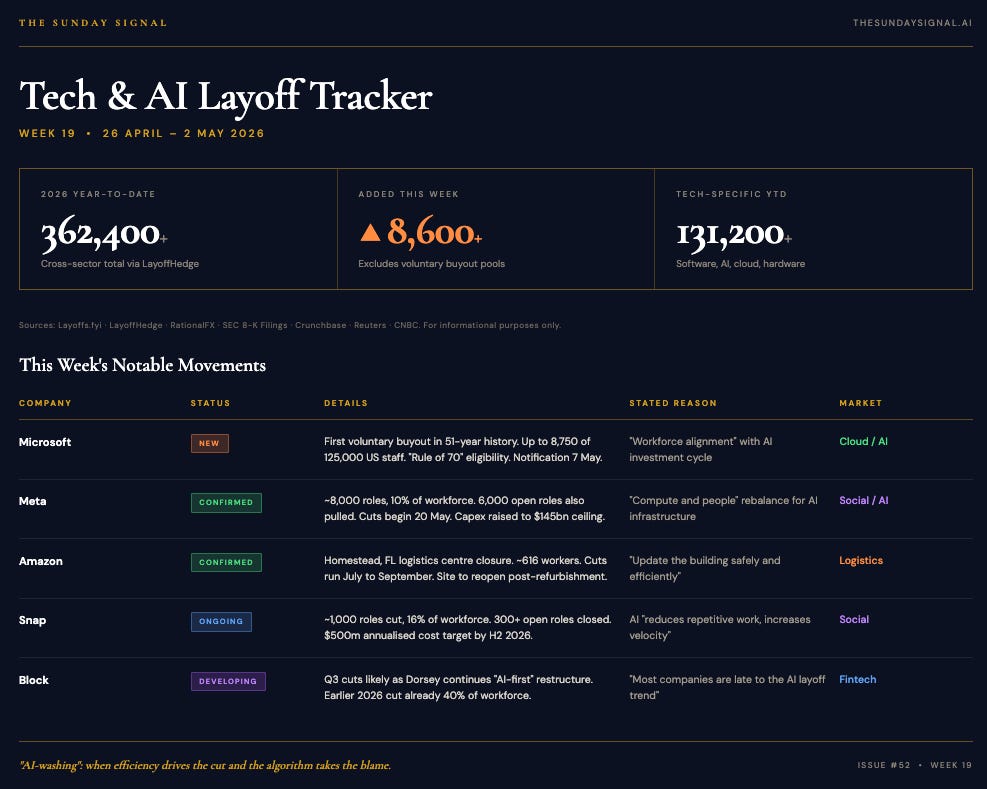

Week 19 | 26 April – 2 May 2026

The Signal: The $650 Billion Squeeze And The Earnings-Week Confession

Earnings week confirmed what the Tracker has been arguing since February. The compute pivot is not a phase. It is permanent. Amazon, Alphabet, Microsoft and Meta all reported Q1 numbers this week, and the aggregate figure is the one that should focus minds. The Mag-7 tech giants have now committed, on the available filings, to a combined $650 billion of AI infrastructure spend in 2026. That money has to come from somewhere. It is coming from the people.

Mark Zuckerberg said it on Wednesday’s earnings call without any of the usual euphemisms. Meta’s 2026 capex guidance has been raised to a ceiling of $145 billion, up from $135 billion. He told staff at a town hall the same day that the company has, in his words, two main cost centres: compute infrastructure and people. The capex is going up. The headcount, accordingly, has to come down. Meta is cutting around 8,000 roles, roughly 10 per cent of its workforce, with the cuts beginning 20 May.

The wider context, picked up by industry watchers, is that the rationale for these cuts is not always what it appears. Marc Andreessen put it bluntly on the 20VC podcast in late March, arguing that most large companies are 25, 50, sometimes 75 per cent overstaffed from the pandemic hiring boom, and that AI has become, in his phrase, the silver-bullet excuse. The industry has its own term for it now. AI-washing. Slap the algorithm on a traditional cost-cutting exercise and watch the share price respond.

Microsoft’s First-Ever Buyout: A Soft Purge

The most noteworthy structural move of the week belongs to Microsoft. Alongside its earnings beat, the company quietly opened the first voluntary retirement program in its 51-year history. Eligibility is governed by what Microsoft calls the Rule of 70: senior director and below, US-based, with combined age and years of service totalling 70 or more. That works out to roughly 8,750 employees of a 125,000-person US workforce. Eligible staff will be notified on 7 May with a 30-day window to decide.
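For readers who think better in code, here is a rough sketch of the Rule of 70 as described above. It assumes the rule is exactly the three conditions stated in this paragraph; the `Employee` fields, the `ELIGIBLE_LEVELS` ladder and the two example employees are hypothetical illustrations, not Microsoft's actual job architecture or data.

```python
from dataclasses import dataclass

# Hypothetical stand-in for "senior director and below"; the real ladder
# of job levels is not given in the source.
ELIGIBLE_LEVELS = {"individual contributor", "manager", "director", "senior director"}


@dataclass
class Employee:
    age: int
    years_of_service: int
    level: str
    us_based: bool


def meets_rule_of_70(e: Employee) -> bool:
    """US-based, senior director or below, and age + service of 70 or more."""
    return (
        e.us_based
        and e.level in ELIGIBLE_LEVELS
        and e.age + e.years_of_service >= 70
    )


# Two made-up employees to show where the line falls.
veteran = Employee(age=55, years_of_service=16, level="director", us_based=True)
newcomer = Employee(age=45, years_of_service=10, level="manager", us_based=True)

print(meets_rule_of_70(veteran))   # True: 55 + 16 = 71
print(meets_rule_of_70(newcomer))  # False: 45 + 10 = 55

# Share of the US workforce the quoted figures imply.
eligible_share = 8_750 / 125_000
print(f"{eligible_share:.0%}")  # 7%
```

On the figures quoted, that is about one US employee in fourteen.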

The framing is gentle. Voluntary. Retirement. Generous package. The reality is colder. This is a soft purge of the company’s most expensive, most tenured legacy salaries, designed to clear balance-sheet space for Azure datacentre buildout without triggering the WARN Act headlines that come with a forced layoff. If the take-up is high enough, Microsoft saves itself a more difficult conversation later in the year. If it is not, expect a less voluntary follow-up by Q3.

The Cloud Paradox

The brutal logic underneath all of this is that the layoffs are working. Alphabet’s stock surged this week on a 63 per cent year-on-year increase in Google Cloud revenue, almost entirely fuelled by enterprise AI adoption. Wall Street has spent a year asking when the multi-billion-dollar capex would actually pay off. Wednesday answered. It is paying off now, in the cloud compute layer, which means the giants are successfully replacing their internal human workforce in order to build the infrastructure that lets every other company replace its human workforce in turn.

That is the loop. It is now visible in the earnings reports.

High-Probability Targets: Week 20

Mid-market SaaS. Now that Alphabet and Microsoft have proven enterprise AI is profitable at scale, smaller SaaS providers running large human sales and support functions will face brutal pressure from activist investors to match Mag-7 efficiency metrics. Expect rolling cuts, framed as “right-sizing”, through May and June.

Logistics and warehousing. Amazon announced this week the closure of a Homestead, Florida logistics centre affecting over 600 workers, citing the need to “update the building safely and efficiently”, a known euphemism for heavy robotics and AI sorting integration. Expect rolling blue-collar cuts disguised as facility upgrades through the summer.

Final Thought

Every story this week pointed at the same uncomfortable fact.

Judgment does not scale automatically. Compute does. Capital does. Code does. Judgment is the only asset in the AI economy that has to be earned the slow way, the expensive way, the way that does not yield to a multiplier without a floor underneath it.

The Oakland trial is, in its way, a referendum on whose judgment we are willing to trust with the most powerful technology in human history. The Yorkshire negotiation at the heart of this issue is a referendum on whose judgment is in the room when it matters. The billion dollars LeCun has just been handed is a referendum on whether the entire industry’s foundational judgment about what AI even is has been wrong for five years.

Three different rooms. Three different stakes. Same question.

In the next decade, the people who win will not be the ones who shouted loudest about AI, or moved fastest to deploy it, or wrote the most confident prompts. They will be the ones who built the floor of expertise underneath everything they touch, who refused to outsource the hard part, who treated the model as a multiplier and never as a substitute.

The rest will sound credible. They will look prepared. And they will be quietly marked down in rooms they never knew they were being judged in.

Most people will only realise this when it is too late.

Before You Go: An Invitation to The Digital Forge

From Research to Revenue. Wednesday 13 May 2026, Sheffield.

Sheffield is becoming one of the UK’s most exciting places for deep tech and university spinouts. In the last five years, thirty-five spinouts from the University of Sheffield alone have raised over £100 million, generated £84 million in combined turnover, and now account for more than half of the region’s deep tech ecosystem. Eighty per cent of them have stayed in South Yorkshire.

The Digital Forge brings together the founders, investors, researchers and operators behind that momentum to answer one question: how do you cross the gap from breakthrough research to scalable company?

Hosted by David Richards MBE. Keynote by Andy Hogben. Fireside with Ken Nettleship. Panel with Chris Iveson and Dr Carolina Scarton. More speakers to be announced.

David Richards MBE is a technology entrepreneur, co-founder of Yorkshire AI Labs, and a weekly columnist for the Yorkshire Post. The Sunday Signal is published every Sunday at thesundaysignal.ai.

© 2026 David Richards. All rights reserved.

Until next Sunday,

David